If you make your own Stata programs and loops, you have discovered the wonders of automating output of analyses to tables. Extracting the results from regressions in Stata can be a bit cumbersome. Here’s one step-by-step approach that you might find helpful.

The set-up

Let’s use the classic 1978 auto dataset that comes with Stata. We want to regress MPG (Y) on weight (x) overall and by strata of domestic vs. foreign to complete the following table:

| Weight Beta (95% CI) | P-value | |

|---|---|---|

| All | ||

| Domestic | ||

| Foreign |

In Stata you’ll run three regressions to fill out the three rows:

sysuse auto, clear

regress mpg weight

regress mpg weight if foreign==0

regress mpg weight if foreign==1You can either copy the output manually, or automate it! Let’s learn how to automate this process.

Let’s get familiar with the ‘guts’ and ‘brains’ behind Stata’s regression functions.

When you run a regression, Stata saves relevant bits of these regressions in scalars and matrices saved in different r() and e() levels. You can view the r() ‘guts’ with -return list- and e() ‘brains’ with -ereturn list-. These have different uses.

- return list – This will give the ‘guts’ of the regression, namely the r() level bits, as you see it in the Stata output. Importantly, the r() level contains the r(table) matrix, which holds all of the raw numbers used to generate the output of your regression as you see it in Stata’s output. These are what you will use to fill out the above blank table.

- Type -matrix list r(table)- to see the structured output of this matrix.

- ereturn list – this will let you see the ‘brains’ behind the regression, namely the e() level bits, which are useful in post-estimation commands. We actually don’t need this to fill out the above table. Just pointing out that they’re here.

Let’s take a look at the regression output below and how they exist in the r() level r(table), I have bolded/underlined the output of interest. Our goal is to:

- Load the sysuse auto dataset.

- Run the regression.

- Take a look at the -return list- to see that the r(table) is hiding there (without actually viewing the contents of r(table))

- Actually view the r(table) matrix in order to verify that all of the data points of interest are hiding there.

. sysuse auto, clear . regress mpg weight Source | SS df MS Number of obs = 74 -------+---------------------------------- F(1, 72) = 134.62 Model | 1591.9902 1 1591.9902 Prob > F = 0.0000 Residu | 851.469256 72 11.8259619 R-squared = 0.6515 -------+---------------------------------- Adj R-squared = 0.6467 Total | 2443.45946 73 33.4720474 Root MSE = 3.4389 ------------------------------------------------------------------- mpg | Coef. Std. Err. t P>|t| [95% Conf. Interval] -------+---------------------------------------------------------------- weight | -.0060087 .0005179 -11.60 0.000 -.0070411 -.0049763 _cons | 39.44028 1.614003 24.44 0.000 36.22283 42.65774 ---------------------------------------------------------------------- . return list scalars: r(level) = 95 matrices: r(table) : 9 x 2 . matrix list r(table) r(table)[9,2] weight _cons b -.00600869 39.440284 se .00051788 1.6140031 t -11.60251 24.436312 pvalue 3.798e-18 1.385e-36 ll -.00704106 36.222827 ul -.00497632 42.65774 df 72 72 crit 1.9934636 1.9934636 eform 0 0

r(table) is a matrix, but an atypical matrix. Copy it to a custom ‘typical’ matrix before doing anything else!

Matrices are basically small spreadsheets saved in the memory that can be accessed by referencing a [row,column] cell reference. These exist separate from the dataset, which is also basically a big spreadsheet. You’ll note above (after the -matrix list r(table)- command) that Stata tells you that the r(table) matrix has 9 rows and 2 columns, or [9,2].

For various reasons that you can read about here, r(table) is not a usual matrix and Stata will do funny things if you try to run matrix commands on it. Make sure to save the r(table) matrix as custom matrix before going any further. Since we actually need to save 3 separate r(table) matrices to fill out the blank table (one for each row), you should do this anyway to help facilitate completing the table. Use the -matrix- command to copy the contents of the r(table) to a custom matrix. Here we’ll:

- Load the sysuse auto dataset

- Run three regressions, one for each row, and

- Save the r(table) matrix for each regression to a custom named matrix. We’ll specifically call them “row1”, “row2”, and “row3”.

- Then, we will confirm that each row is saved by plopping the command to view the matrices at the end.

sysuse auto, clear * first row regress mpg weight * save as a custom matrix matrix row1=r(table) * second row regress mpg weight if foreign==0 * save as a custom matrix matrix row2=r(table) * third row regress mpg weight if foreign==1 * save as a custom matrix matrix row3=r(table) * prove that all three saved matrix list row1 matrix list row2 matrix list row3

The stata output for the last three lines should look like the output below. Note that the beta coefficient is at [1,1], the 95% confidence interval bounds are at [5,1] and [6,1], and the p-value is at [4,1]. I bolded/underlined the first to highlight this.

. matrix list row1

row1[9,2]

weight _cons

b -.00600869 39.440284

se .00051788 1.6140031

t -11.60251 24.436312

pvalue 3.798e-18 1.385e-36

ll -.00704106 36.222827

ul -.00497632 42.65774

df 72 72

crit 1.9934636 1.9934636

eform 0 0

.

. matrix list row2

row2[9,2]

weight _cons

b -.00597508 39.646965

se .00046538 1.5766224

t -12.839251 25.146772

pvalue 1.890e-17 4.898e-30

ll -.00690982 36.480225

ul -.00504035 42.813704

df 50 50

crit 2.0085591 2.0085591

eform 0 0

.

. matrix list row3

row3[9,2]

weight _cons

b -.01042596 48.918297

se .00249417 5.8718507

t -4.1801326 8.3309845

pvalue .00046167 6.196e-08

ll -.0156287 36.669831

ul -.00522321 61.166763

df 20 20

crit 2.0859634 2.0859634

eform 0 0

Let’s extract the matrix components of interest as macros!

Macros are little ‘codewords’ that represent another variable or string. You can pluck a cell of a matrix and store it as a macro. Later on, use can use that ‘codeword’ associated with the macro to make Stata blurt out the stored cell result. We just need to point the macro at the right matrix cell in order to extract the cell’s results. It sounds confusing but it’s not. Remember the [row,column] numbers from above? We’ll use those numbers to extract the matrix cell results into macros. Next steps:

- Load the sysuse auto dataset

- Run a regression for the first three rows of our table, saving the r(table) matrix for each regression as our custom matrix (row1-3)

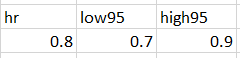

- Use macros to extract the [1,1] as beta coefficient, [5,1] and [6,1] as the 95% confidence intervals, and [4,1] as the p-value for each row.

- View each macro with the -display- opening tick (`), to the left of the number 1 on your keyboard, the macro name, and a closing apostrophe (‘).

- You can use number formatting like %3.2f (e.g., 0.56) or %4.3f (0.558) to limit the number of digits following the decimal. This will also round. (Note: If you want to use super fancy formatting of a P-value, see this post.)

Code to do this:

sysuse auto, clear

* first row

regress mpg weight

* save as a custom matrix

matrix row1=r(table)

* second row

regress mpg weight if foreign==0

* save as a custom matrix

matrix row2=r(table)

* third row

regress mpg weight if foreign==1

* save as a custom matrix

matrix row3=r(table)

****

*now save the beta, 95% ci, and P as macros

*row 1

local beta1=row1[1,1]

local low951=row1[5,1]

local high951=row1[6,1]

local pval1=row1[4,1]

*row 2

local beta2=row2[1,1]

local low952=row2[5,1]

local high952=row2[6,1]

local pval2=row2[4,1]

*row 3

local beta3=row3[1,1]

local low953=row3[5,1]

local high953=row3[6,1]

local pval3=row3[4,1]

*now view these

*row 1

di "row1 beta is " %3.2f `beta1'

di "row1 95% CI is " %3.2f `low951' " to " %3.2f `high951'

di "row1 P-val is " %4.3f `pval1'

*row 2

di "row2 beta is " %3.2f `beta2'

di "row2 95% CI is " %3.2f `low952' " to " %3.2f `high952'

di "row2 P-val is " %4.3f `pval2'

*row 3

di "row3 beta is " %3.2f `beta3'

di "row3 95% CI is " %3.2f `low953' " to " %3.2f `high953'

di "row3 P-val is " %4.3f `pval3'

Hide in -quietly- curly brackets and output a CSV file using -noisily- commands and a log!

Here’s my code to run the three regression, store the r(table) matrices, extract the data of interest, and output as a .csv file! Run this from a .do file as it includes the -quietly- command, which confuses Stata if it’s run from the command line.

sysuse auto, clear

* first row

regress mpg weight

* save as a custom matrix

matrix row1=r(table)

* second row

regress mpg weight if foreign==0

* save as a custom matrix

matrix row2=r(table)

* third row

regress mpg weight if foreign==1

* save as a custom matrix

matrix row3=r(table)

****

*view the custom matrices to prove they worked

matrix list row1

matrix list row2

matrix list row3

****

*now save the beta, 95% ci, and P as macros

*here's a loop that automates this a bit

foreach x in 1 2 3 {

local beta`x'=row`x'[1,1]

local low95`x'=row`x'[5,1]

local high95`x'=row`x'[6,1]

local pval`x'=row`x'[4,1]

}

pwd // this is where your CSV file is saved

quietly {

capture log close table // always good to close any open logs

log using "regressiontable.csv", replace text name(table)

*header

noisily di ",Weight beta (95% CI),P-value"

*row 1

noisily di "All," %3.2f `beta1' " (" %3.2f `low951' " to " %3.2f `high951' ")," %4.3f `pval1'

*row 2

noisily di "Domestic," %3.2f `beta2' " (" %3.2f `low952' " to " %3.2f `high952' ")," %4.3f `pval2'

*row 3

noisily di "Foreign," %3.2f `beta3' " (" %3.2f `low953' " to " %3.2f `high953' ")," %4.3f `pval3'

log close table

}Boom! you’ll get a CSV file that looks like this, which should be simple to import in Excel!

,Weight beta (95% CI),P-value

All,-0.01 (-0.01 to -0.00),0.000

Domestic,-0.01 (-0.01 to -0.01),0.000

Foreign,-0.01 (-0.02 to -0.01),0.000

You’ll notice that these numbers are small, so you may want to use %4.3f instead of %3.2f to get 3 digits past the decimal place for the beta and 95% CIs.

Bonus: Generating a CSV file with pretty P-values

Here’s the same code, but using the “pretty” p-value code documented in this separate post.

sysuse auto, clear

* first row

regress mpg weight

* save as a custom matrix

matrix row1=r(table)

* second row

regress mpg weight if foreign==0

* save as a custom matrix

matrix row2=r(table)

* third row

regress mpg weight if foreign==1

* save as a custom matrix

matrix row3=r(table)

****

*view the custom matrices to prove they worked

matrix list row1

matrix list row2

matrix list row3

****

*now save the beta, 95% ci, and P as macros

*here's a loop that automates this a bit

foreach x in 1 2 3 {

local beta`x'=row`x'[1,1]

local low95`x'=row`x'[5,1]

local high95`x'=row`x'[6,1]

local pval`x'=row`x'[4,1]

local pval = r(table)[4,1]

if `pval`x''>=0.056 {

local pvalue`x' "P=`: display %3.2f `pval`x'''"

}

if `pval`x''>=0.044 & `pval'<0.056 {

local pvalue`x' "P=`: display %5.4f `pval`x'''"

}

if `pval`x'' <0.044 {

local pvalue`x' "P=`: display %4.3f `pval`x'''"

}

if `pval`x'' <0.001 {

local pvalue`x' "P<0.001"

}

if `pval`x'' <0.0001 {

local pvalue`x' "P<0.0001"

}

di "original P is " `pval`x'' ", formatted is " "`pvalue`x''"

}

pwd // this is where your CSV file is saved

quietly {

capture log close table // always good to close any open logs

log using "regressiontable.csv", replace text name(table)

*header

noisily di ",Weight beta (95% CI),P-value"

*row 1

noisily di "All," %3.2f `beta1' " (" %3.2f `low951' " to " %3.2f `high951' ")," "`pvalue1'"

*row 2

noisily di "Domestic," %3.2f `beta2' " (" %3.2f `low952' " to " %3.2f `high952' ")," "`pvalue2'"

*row 3

noisily di "Foreign," %3.2f `beta3' " (" %3.2f `low953' " to " %3.2f `high953' ")," "`pvalue3'"

log close table

}Here’s the output:

,Weight beta (95% CI),P-value

All,-0.01 (-0.01 to -0.00),P<0.0001

Domestic,-0.01 (-0.01 to -0.01),P<0.0001

Foreign,-0.01 (-0.02 to -0.01),P<0.001